Retinal Image Graph-Cut Segmentation Algorithm Using Multiscale Hessian-Enhancement-Based Nonlocal Mean Filter

1 Suzhou Institute of Biomedical Engineering and Technology, Chinese Academy of Sciences, Suzhou 215163, China

2 Department of Ophthalmology, The First Affiliated Hospital of Soochow University, Suzhou 215006, China

3 Institute of Automation, Chinese Academy of Sciences, Beijing 100190, China

Received 4 January 2013; Accepted 25 March 2013

Academic Editor: Yujie Lu

Copyright © 2013 Jian Zheng et al. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

We propose a new method to enhance and extract the retinal vessels. First, we apply a multiscale Hessian-based filter to compute the maximum response of the vessel likeness function for each pixel. In this step, blood vessels of different widths are considerably enhanced. Then, we adopt a nonlocal mean filter to suppress the noise of the enhanced image while preserving the vessel information. Next, a radial gradient symmetry transformation is adopted to suppress the nonvessel structures. Finally, an accurate graph-cut segmentation step is performed, using the result of the symmetry transformation as the initialization. We test the proposed approach on the publicly available DRIVE database. The experimental results show that our method is quite effective.

The retina is the only tissue in the human body from which information about blood vessels can be directly obtained in vivo. Information about retinal vessels plays an important role in the diagnosis and treatment of many diseases such as glaucoma [1], age-related macular degeneration [2], degenerative myopia, and diabetic retinopathy [3].

Recently, it has also been found that detecting changes in vascular geometry may help assess whether a person has high blood pressure or cardiovascular disease [4]. Retinal vessel extraction is therefore essential for ophthalmologists to diagnose various eye diseases. Moreover, accurately segmented vessels are very useful for feature-based retinal image registration.

In general, existing retinal vessel segmentation methods can be roughly divided into two categories. The first category is supervised learning-based methods. Such methods require a large number of manual segmentations of the vasculature to train the classifier. Staal et al. [5] first adopt a ridge-extraction method to separate the image into numerous patches. For each pixel in a patch, a feature vector containing profile information such as width, height, and edge strength is computed. The feature vectors are then classified using a k-nearest-neighbor classifier with a sequential forward feature selection strategy. Soares et al. [6] selected the pixel value and multiscale 2D Gabor wavelet coefficients to form the feature vector. A Bayesian classifier based on class-conditional probability density functions was used to perform fast classification and model complex decision surfaces. Marín et al. [7] proposed a new supervised method to segment retinal vessels. For each pixel, 5 gray-level descriptors and 2 moment invariants-based features of a square window were computed as the feature vector. A multilayer neural network scheme was then adopted to classify each pixel. The performance of such methods depends on the correlation between training data and test data. If the two datasets are very different, the segmentation results may be less than ideal. In addition, the manual segmentation step places an extra burden on ophthalmologists. Therefore, such methods have not yet been widely used in clinical practice.

The second category is rule-based methods. Such methods usually need to extract neighborhood information for each pixel. This information is then used to label the pixel according to some preset rules. Matched filters [8], model-based methods [9], and morphology-based methods [10] all belong to this category. In [8], Sofka and Stewart proposed a multiscale Gaussian and Gaussian-derivative profile kernel to detect vessels at a variety of widths. Lam et al. [9] proposed a multiconcavity modeling approach to handle pathological retinal images. In Lam's approach, a line-shape concavity measure is used to remove dark lesions, and a locally normalized concavity measure is designed to remove spherical intensity variation. The two concavity measures are combined according to their statistical distributions to detect vessels in general retinal images. Mendonça and Campilho [10] proposed a region growing algorithm on the morphologically processed image to extract the vasculature. First, a four-direction differential operator is used to detect vascular centerlines. These centerline segments are then merged into complete vascular centerlines and selected as seed points. Finally, a region growing step is performed to rebuild the vasculature. These methods do not require training steps, and the interaction with doctors is also minimized; thus rule-based methods have been widely used in clinical applications. However, ideal automatic retinal vessel segmentation is still difficult, mainly for two reasons. One is that the contrast of some small vessels may be very low, especially in images affected by pathologies. The other is that image noise and artifacts such as edge blurring may disturb the final segmentation results.

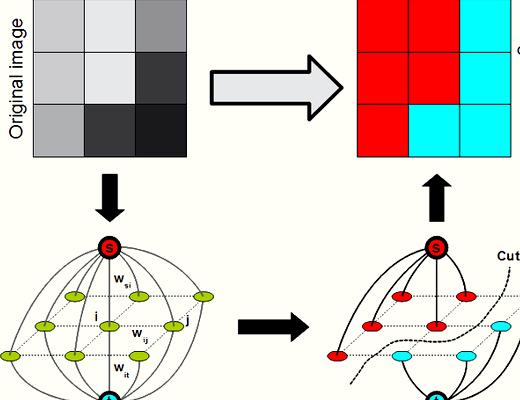

Recently, graph-cut methods [11–15] have become very popular in image segmentation. This is because graph-cut methods can reach the global optimum of the predefined energy function. In addition, the user interaction required by such methods is very simple. Several graph-cut-based methods have been proposed for retinal image segmentation. Chen et al. [16] proposed a 3D graph-search-graph-cut method to segment the multiple layers of 3D OCT retinal images. The layers of the retina and the symptomatic exudate-associated derangements (SEAD) are segmented successfully. Inspired by the superior performance of graph-cut methods, we propose a new method to extract the blood vessels in retinal images, following our previous work [17]. First, we apply a novel multiscale Hessian-based filter to compute the maximum response of the vessel likeness function for each pixel, which is used to enhance the blood vessels of gray retinal images. Then, we adopt a nonlocal mean filter to suppress the noise of the enhanced image. Next, a radial gradient symmetry transformation is adopted to improve the detection of vessel structures and suppress the nonvessel structures. Finally, an accurate graph-cut segmentation is performed, using the result of the symmetry transformation as the initialization.

To improve the contrast of retinal vessels of different widths, we propose a multiscale Hessian-based enhancement. Frangi et al. [18] proposed a way to detect tubular structures based on the eigenvalues of the Hessian matrix. Following that approach, we compute the eigenvalues of the scale-dependent Hessian matrix at each pixel and define a vessel likeness function from them; the function includes one empirically chosen parameter.

The value of the vessel likeness function indicates the saliency of tubular structure at each pixel. We search over a range of scales to find the maximum response of the vessel likeness function. Figure 2 shows the optimal scale of the input image: each pixel value is the scale at which the vessel likeness function is maximal. As seen in Figure 2, there is a clear positive correlation between vessel width and optimal scale. Main vessels have a large optimal scale, while small vessels have a small one.
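The scale search can be illustrated with a minimal sketch for a single pixel. It assumes the standard 2D form of the Frangi response for dark vessels, which may differ from the exact vessel likeness function used here; the function names, the parameters `beta` and `c`, and the precomputed scale-normalized Hessian entries per scale are all hypothetical.

```python
import math

def hessian_eigenvalues(hxx, hxy, hyy):
    """Eigenvalues of a symmetric 2x2 Hessian, ordered so that |l1| <= |l2|."""
    mean = 0.5 * (hxx + hyy)
    dev = math.sqrt((0.5 * (hxx - hyy)) ** 2 + hxy ** 2)
    return tuple(sorted((mean - dev, mean + dev), key=abs))

def vessel_likeness(l1, l2, beta=0.5, c=2.0):
    """Frangi-style 2D response for dark tubular structures (assumed form)."""
    if l2 <= 0:  # a dark ridge needs positive curvature across the vessel
        return 0.0
    blobness = l1 / l2               # near 0 for line-like structures
    structure = math.hypot(l1, l2)   # small in flat background regions
    return (math.exp(-blobness ** 2 / (2 * beta ** 2))
            * (1 - math.exp(-structure ** 2 / (2 * c ** 2))))

def best_scale(hessians_by_scale, beta=0.5, c=2.0):
    """Return (max response, scale) over a dict {scale: (hxx, hxy, hyy)}."""
    return max(
        (vessel_likeness(*hessian_eigenvalues(*h), beta=beta, c=c), s)
        for s, h in hessians_by_scale.items()
    )

# One pixel observed at three scales; the Hessian entries are made-up values.
response, scale = best_scale({1.0: (0.0, 0.0, 1.0),
                              2.0: (0.0, 0.0, 4.0),
                              4.0: (0.0, 0.0, 2.0)})
```

Storing `scale` for every pixel gives a scale image of the kind shown in Figure 2, and storing `response` gives an enhanced image of the kind shown in Figure 3.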

Figure 1: An example of an input retinal image, downloaded from the publicly available DRIVE database (www.isi.uu.nl/Research/Databases/DRIVE/).

Figure 2: An example of the scale image of Figure 1; each pixel value is the scale corresponding to the maximal value of the vessel likeness function.

Figure 3 gives an example of multiscale Hessian-based enhancement, in which each pixel value is the vessel likeness function value. As shown in Figure 3, the whole retinal vasculature is conspicuously enhanced. However, some nonvessel structures are also enhanced, including the optic disk, yellow spots, and speckles. These nonvessel structures need to be removed in the following steps.

Figure 3: An example of the multiscale Hessian-based enhancement of Figure 1; each pixel value is the vessel likeness function value.

The effect of the multiscale Hessian-based enhancement is apparent. As shown in Figure 3, the whole vasculature is considerably strengthened. However, some nonvessel structures are also enhanced, which makes the enhanced image noisy. To suppress image noise while keeping the structural information, we apply a nonlocal mean filtering step [19] to the enhanced image.

In this filter, each output pixel is a weighted average of the enhanced-image pixels inside a filtering neighborhood. The weighting factor for a pair of pixels is computed from their feature vectors and a filtering parameter, where the feature vector of a pixel consists of the pixel values in the nearby area surrounding it. The sizes of the filtering neighborhood and of the nearby area have to be chosen suitably to balance filtering performance against computation cost. Figure 4 shows a result of nonlocal mean filtering. The noise of the enhanced image is suppressed effectively while the vasculature is preserved.
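As a rough illustration of the filtering step, the sketch below implements a plain nonlocal mean filter in pure Python on a small image stored as a list of rows. It follows the general scheme of Buades et al. [19] with an exponential weight on the mean squared patch difference; the names `search_half`, `patch_half`, and `h`, the border clamping, and the default values are assumptions made for illustration, not the settings used in this work.

```python
import math

def _patch(img, r, c, half):
    """Flattened square patch around (r, c); borders are handled by clamping."""
    rows, cols = len(img), len(img[0])
    return [img[min(max(r + dr, 0), rows - 1)][min(max(c + dc, 0), cols - 1)]
            for dr in range(-half, half + 1)
            for dc in range(-half, half + 1)]

def nonlocal_means(img, search_half=2, patch_half=1, h=0.1):
    """Replace each pixel by a patch-similarity-weighted mean of its neighbors."""
    rows, cols = len(img), len(img[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            ref = _patch(img, r, c, patch_half)
            weight_sum = 0.0
            acc = 0.0
            # Filtering neighborhood: a square search window around (r, c).
            for rr in range(max(r - search_half, 0), min(r + search_half, rows - 1) + 1):
                for cc in range(max(c - search_half, 0), min(c + search_half, cols - 1) + 1):
                    cand = _patch(img, rr, cc, patch_half)
                    dist2 = sum((a - b) ** 2 for a, b in zip(ref, cand)) / len(ref)
                    w = math.exp(-dist2 / (h * h))  # similar patches weigh more
                    weight_sum += w
                    acc += w * img[rr][cc]
            out[r][c] = acc / weight_sum  # weight_sum >= 1 (self weight is 1)
    return out
```

The cost grows with the product of the search-window area and the patch area for every pixel, which is the trade-off between filtering performance and computation cost mentioned above.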

Figure 4: An output of the nonlocal mean filter applied to Figure 3; the image noise is effectively suppressed while the vasculature is preserved.

To remove the nonvessel structures, we propose a radial gradient symmetry transform based on the work of Loy and Zelinsky [20]. An ideal vessel structure is shown in Figure 5. As shown there, the gradient vectors on the two sides of a vessel are symmetric in both magnitude and direction. Nonvessel structures have no such property. We therefore propose a symmetric vessel likeness function that combines two terms: a vessel likeness function of a given point along the direction of the gradient vector, and an indicator function that states whether the point has the gradient symmetry property. These two terms are computed in the following steps.

(1) For each pixel in the filtered image (Figure 4), we compute its vessel contribution along the gradient direction. From the normalized gradient vector and the optimal scale parameter of the pixel (Figure 2), we compute the coordinates of the pixels affected by it.

(2) For these affected pixels, we compute the vessel accumulation image.

(3) The vessel likeness term is then computed from the accumulation image, using a normalization factor and a radial strictness parameter.

(4) For each pixel, we search its neighborhood along the gradient direction. As shown in Figure 5, if there are points that satisfy the symmetry condition, the indicator function marks the pixel as symmetric; otherwise, it does not.

(5) We apply a vessel likeness threshold to the symmetric vessel likeness function to extract a rough vasculature. Next, we apply an erosion step to obtain a narrowed retinal vasculature. We then count the pixels of each connected vessel component. If the pixel count is smaller than a preset threshold, we regard the component as background noise and remove it.
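The last part of step (5), removing small connected components, can be sketched as follows. The function name `remove_small_components`, the binary list-of-rows mask, and the use of 8-connectivity are assumptions for illustration; the text does not state which connectivity or threshold value is used at this stage.

```python
from collections import deque

def remove_small_components(mask, min_pixels):
    """Keep only 8-connected components with at least min_pixels pixels."""
    rows, cols = len(mask), len(mask[0])
    out = [[0] * cols for _ in range(rows)]
    seen = [[False] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first search collects one connected component.
            seen[r][c] = True
            component, queue = [], deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                component.append((y, x))
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < rows and 0 <= nx < cols
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            # Components below the threshold are treated as background noise.
            if len(component) >= min_pixels:
                for y, x in component:
                    out[y][x] = 1
    return out
```

For example, with `min_pixels=2` an isolated single-pixel speckle is dropped while a three-pixel vessel fragment is kept.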

Figure 5: An example of the radial gradient symmetry transform; a pixel inside the vessel area is usually located between two symmetric gradient vectors.

The previous processing steps give a coarsely extracted vasculature. The final step is to segment the vessels accurately from this result. This can be described as a pixel labeling problem, formulated with an energy function over a labeling set. The energy is the sum of two terms. The data prior energy measures the cost of assigning a label to a given pixel according to prior information. The potential energy measures the smoothness of the neighboring pixel system, and it is scaled by a weighting parameter.

We adopt the graph-cut algorithm to optimize the energy function (12). The centerline is used as a shape prior to guide the extraction process. In our framework, a graph is created with nodes corresponding to the pixels of the retinal image; it consists of the set of all nodes and the set of all links connecting neighboring nodes. The neighboring pixel system is built with eight neighboring pixels. The terminal nodes are defined as the source and the sink. As an initialization, we extract the centerline of the previously extracted vasculature. Pixels on the centerline are treated as definite foreground, and a further set of pixels is labeled candidate foreground. Another set of pixels is labeled background, and all remaining pixels are candidate background.

For each pixel, we compute its minimum distances to the definite foreground and to the background, following [22]. The costs of the t-links are computed from these distances. The nodes in the definite foreground and in the background are labeled as foreground and background, respectively, with certainty. The weight of an n-link describes the labeling coherence of a pixel with its neighbors. We use the pixel value information as the neighborhood penalty term. The cost of the n-links includes a small number in the denominator to prevent division by zero. When the graph-cut algorithm terminates, candidate foreground pixels are encouraged to be labeled as foreground, and candidate background pixels are discouraged from being labeled as background.
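To make the t-link and n-link construction concrete, here is a small sketch of binary labeling by minimum cut. It is not the solver or the cost definition used in this work: the per-pixel costs `cost_fg` and `cost_bg`, the constant n-link weight `smooth`, and the function name are hypothetical, and a simple Edmonds-Karp max-flow stands in for a dedicated graph-cut library such as the algorithm of [12].

```python
from collections import deque

def min_cut_labels(cost_fg, cost_bg, neighbors, smooth):
    """Binary labeling by s-t minimum cut; returns 1 (foreground) or 0 per pixel."""
    n = len(cost_fg)
    source, sink = n, n + 1
    cap = [dict() for _ in range(n + 2)]  # residual capacities

    def add_edge(u, v, c):
        cap[u][v] = cap[u].get(v, 0.0) + c
        cap[v].setdefault(u, 0.0)  # reverse residual edge

    for p in range(n):
        add_edge(source, p, cost_bg[p])  # t-link cut when p is labeled background
        add_edge(p, sink, cost_fg[p])    # t-link cut when p is labeled foreground
    for p, q in neighbors:
        add_edge(p, q, smooth)           # n-link cut when p and q get different labels
        add_edge(q, p, smooth)

    while True:
        # Breadth-first search for an augmenting path in the residual graph.
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            u = queue.popleft()
            for v, c in cap[u].items():
                if c > 1e-12 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if sink not in parent:
            break  # no path left: parent holds the source side of the min cut
        path, v = [], sink
        while parent[v] is not None:
            path.append((parent[v], v))
            v = parent[v]
        flow = min(cap[u][v] for u, v in path)
        for u, v in path:
            cap[u][v] -= flow
            cap[v][u] += flow
    return [1 if p in parent else 0 for p in range(n)]

# A row of four pixels: the left end prefers foreground, the right end background.
labels = min_cut_labels([0, 2, 3, 5], [5, 3, 2, 0], [(0, 1), (1, 2), (2, 3)], 1)
```

Definite foreground or background pixels can be forced in this scheme by giving the opposite label a very large cost, so that the corresponding t-link is never cut.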

We test our method on the publicly available DRIVE database [5]. Three measures, sensitivity (SE), specificity (SP), and accuracy (AC), are used to evaluate the performance of our method within the image field of view. SE is the ratio of the number of correctly classified vessel pixels to the number of vessel pixels in the ground truth. SP is the ratio of the number of correctly classified nonvessel pixels to the number of nonvessel pixels. AC is the ratio of the total number of correctly classified pixels to the total number of pixels.
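The three measures can be computed from a predicted mask and a ground-truth mask as in the sketch below. The function name, the list-of-rows mask representation, and the optional `fov` mask argument are assumptions for illustration; the sketch also assumes that both vessel and nonvessel pixels are present inside the field of view.

```python
def segmentation_metrics(predicted, truth, fov=None):
    """Sensitivity, specificity, and accuracy of a binary vessel mask."""
    tp = tn = vessel = nonvessel = 0
    for r, row in enumerate(truth):
        for c, is_vessel in enumerate(row):
            if fov is not None and not fov[r][c]:
                continue  # ignore pixels outside the field of view
            pred = predicted[r][c]
            if is_vessel:
                vessel += 1
                tp += 1 if pred else 0      # correctly classified vessel pixel
            else:
                nonvessel += 1
                tn += 1 if not pred else 0  # correctly classified nonvessel pixel
    return {
        "SE": tp / vessel,
        "SP": tn / nonvessel,
        "AC": (tp + tn) / (vessel + nonvessel),
    }

truth = [[1, 1, 0, 0],
         [0, 0, 0, 0]]
predicted = [[1, 0, 0, 1],
             [0, 0, 0, 0]]
metrics = segmentation_metrics(predicted, truth)
```

In this toy case one of two vessel pixels and five of six nonvessel pixels are correct, so SE is 0.5, SP is 5/6, and AC is 0.75.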

We test the proposed method on 40 images and compare it with the methods developed by Staal et al. [5], Mendonça and Campilho [10], Wang et al. [21], and Marín et al. [7]. The mean values and standard deviations (sd.) of these methods are shown in Table 1. Values that cannot be obtained from the literature are denoted by NA.

Table 1: Comparison of different segmentation methods.

From Table 1 we can see that there is a notable improvement in sensitivity. This means that our method is very effective at extracting small vessels. However, our method is not as good as the others in either specificity or accuracy. For clinical application, the accuracy should be better than 90%. Our method meets this standard, but large improvements are still needed.

Some of the extraction results are shown in Figures 6 and 7. As can be seen from Figure 6, most of the vessels in the retina are finely extracted; however, some nonvessel structures are also extracted and some small vessels are not correctly segmented. This is mainly because our method focuses more on small vessel extraction, which is not easy for ophthalmologists to do manually. As a result, some boundaries between the retinal region and the background are mistakenly recognized as vessels. In addition, the optic disk cannot be fully excluded from the final result. Figure 7 shows two segmentation results of ill-conditioned retinal images, in which some speckles are hard to differentiate from small vessels. Our method fails to extract an accurate vasculature in these cases; the AC values are 0.8720 and 0.8814, respectively. Furthermore, we exchanged the order of nonlocal mean filtering and multiscale Hessian-based enhancement. Experimental results show that there is little difference between the performances. This indicates that the nonlocal mean filter is very robust, and it is widely used in image processing.

Figure 6: An example of 3 extraction results: the first row shows 3 input retinal images and the second row shows the segmentation results of the retinal vessels.

Figure 7: Two segmentation results of ill-conditioned retinal images; the speckles reduce the performance of our algorithm.

In conclusion, this paper first presents a multiscale Hessian-based enhancement for retinal images. Next, we adopt an effective nonlocal mean filtering step to suppress the noise of the enhanced image. Then, we propose a radial gradient symmetry transform method to suppress the nonvessel artifacts. Finally, a graph-cut step is taken to segment the retinal vessels accurately. Experiments show that our method is very sensitive for vessel segmentation, but the performance on some small vessels and on speckle exclusion still needs to be improved. This will be the subject of our further work.

This work is supported in part by the Natural Science Foundation of Jiangsu Province (Grant no. BK2011331), National Science Foundation of China (Grant no. 61201117), Special Funded Program on National Key Scientific Instruments and Equipment Development (Grant no. 2011YQ040082), and the Second Phase Major Project of Suzhou Institute of Biomedical Engineering and Technology (Grant no. Y053011305).

- C. Sanchez-Galeana, C. Bowd, E. Z. Blumenthal, P. A. Gokhale, L. M. Zangwill, and R. N. Weinreb, “Using optical imaging summary data to detect glaucoma,” Ophthalmology, vol. 108, no. 10, pp. 1812–1818, 2001.

- S. Wang, L. Xu, Y. Wang, Y. Wang, and J. B. Jonas, “Retinal vessel diameter in normal and glaucomatous eyes: the Beijing eye study,” Clinical and Experimental Ophthalmology, vol. 35, no. 9, pp. 800–807, 2007.

- G. Garhöfer, C. Zawinka, H. Resch, P. Kothy, L. Schmetterer, and G. T. Dorner, “Reduced response of retinal vessel diameters to flicker stimulation in patients with diabetes,” British Journal of Ophthalmology, vol. 88, no. 7, pp. 887–891, 2004.

- H. Yatsuya, A. R. Folsom, T. Y. Wong, R. Klein, B. E. K. Klein, and A. R. Sharrett, “Retinal microvascular abnormalities and risk of lacunar stroke: Atherosclerosis Risk in Communities Study,” Stroke, vol. 41, no. 7, pp. 1349–1355, 2010.

- J. Staal, M. Abràmoff, M. Niemeijer, M. A. Viergever, and B. van Ginneken, “Ridge-based vessel segmentation in color images of the retina,” IEEE Transactions on Medical Imaging, vol. 23, no. 4, pp. 501–509, 2004.

- J. V. B. Soares, J. J. G. Leandro, R. M. Cesar Jr., H. F. Jelinek, and M. J. Cree, “Retinal vessel segmentation using the 2-D Gabor wavelet and supervised classification,” IEEE Transactions on Medical Imaging, vol. 25, no. 9, pp. 1214–1222, 2006.

- D. Marín, A. Aquino, M. E. Gegúndez-Arias, and J. M. Bravo, “A new supervised method for blood vessel segmentation in retinal images by using gray-level and moment invariants-based features,” IEEE Transactions on Medical Imaging, vol. 30, no. 1, pp. 146–158, 2011.

- M. Sofka and C. V. Stewart, “Retinal vessel centerline extraction using multiscale matched filters, confidence and edge measures,” IEEE Transactions on Medical Imaging, vol. 25, no. 12, pp. 1531–1546, 2006.

- B. S. Y. Lam, Y. Gao, and A. W. C. Liew, “General retinal vessel segmentation using regularization-based multiconcavity modeling,” IEEE Transactions on Medical Imaging, vol. 29, no. 7, pp. 1369–1381, 2010.

- A. M. Mendonça and A. Campilho, “Segmentation of retinal blood vessels by combining the detection of centerlines and morphological reconstruction,” IEEE Transactions on Medical Imaging, vol. 25, no. 9, pp. 1200–1213, 2006.

- Y. Boykov, O. Veksler, and R. Zabih, “Fast approximate energy minimization via graph cuts,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 23, no. 11, pp. 1222–1239, 2001.

- Y. Boykov and V. Kolmogorov, “An experimental comparison of min-cut/max-flow algorithms for energy minimization in vision,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 26, no. 9, pp. 1124–1137, 2004.

- Y. Boykov and G. Funka-Lea, “Graph cuts and efficient N-D image segmentation,” International Journal of Computer Vision, vol. 70, no. 2, pp. 109–131, 2006.

- X. Chen, J. K. Udupa, U. Bagci, Y. Zhuge, and J. Yao, “Medical image segmentation by combining graph cuts and oriented active appearance models,” IEEE Transactions on Image Processing, vol. 21, no. 4, pp. 2035–2046, 2012.

- D. Freedman and T. Zhang, “Interactive graph cut based segmentation with shape priors,” in Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR ’05), pp. 755–762, June 2005.

- X. Chen, M. Niemeijer, L. Zhang, K. Lee, M. D. Abramoff, and M. Sonka, “Three-dimensional segmentation of fluid-associated abnormalities in retinal OCT: probability constrained graph-search-graph-cut,” IEEE Transactions on Medical Imaging, vol. 31, no. 8, pp. 1521–1531, 2012.

- D. Xiang, J. Tian, K. Deng, X. Zhang, F. Yang, and X. Wan, “Retinal vessel extraction by combining radial symmetry transform and iterated graph cuts,” in Proceedings of the Annual International Conference of the IEEE Engineering in Medicine and Biology Society, pp. 3950–3953, 2011.

- A. F. Frangi, W. J. Niessen, K. L. Vincken, and M. A. Viergever, “Multiscale vessel enhancement filtering,” in Medical Image Computing and Computer-Assisted Intervention, vol. 1496 of Lecture Notes in Computer Science, pp. 130–137, 1998.

- A. Buades, B. Coll, and J. M. Morel, “A non-local algorithm for image denoising,” in Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR ’05), pp. 60–65, June 2005.

- G. Loy and A. Zelinsky, “Fast radial symmetry for detecting points of interest,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 25, no. 8, pp. 959–973, 2003.

- L. Wang, A. Bhalerao, and R. Wilson, “Analysis of retinal vasculature using a multiresolution Hermite model,” IEEE Transactions on Medical Imaging, vol. 26, no. 2, pp. 137–152, 2007.

- Y. Li, J. Sun, C. Tang, and H.-Y. Shum, “Lazy snapping,” ACM Transactions on Graphics, vol. 23, no. 3, pp. 303–308, 2004.

Retinal Image Graph-Cut Segmentation Formula Using Multiscale Hessian-Enhancement-Based Nonlocal Mean Filter

1 Suzhou Institute of Biomedical Engineering and Technology, Chinese Academy of Sciences, Suzhou 215163, China

2 Department of Ophthalmology, The Very First Affiliated Hospital of Soochow College, Suzhou 215006, China

3 Institute of Automation, Chinese Academy of Sciences, Beijing 100190, China

Received 4 The month of january 2013 Recognized 25 March 2013

Academic Editor: Yujie Lu

2013 Jian Zheng et al. It is really an open access article distributed underneath the Creative Commons Attribution License. which allows unrestricted use, distribution, and reproduction in almost any medium, provided the initial jobs are correctly reported.

We advise a brand new approach to enhance and extract the retinal vessels. First, we use a multiscale Hessian-based filter to compute the utmost response of vessel likeness function for every pixel. With this step, bloodstream vessels of various widths are considerably enhanced. Then, we adopt a nonlocal mean filter to suppress the noise of enhanced image and keep the vessel information simultaneously. Next, a radial gradient symmetry transformation is adopted to suppress the nonvessel structures. Finally, a precise graph-cut segmentation step is conducted using caused by previous symmetry transformation being an initial. We test the suggested approach around the openly available databases: DRIVE. The experimental results reveal that our technique is very efficient.

The retina may be the only tissue in body that the data of circulation system could be directly acquired in vivo. The data of retinal vessel plays a huge role within the treatment and diagnosis of numerous illnesses for example glaucoma [1 ], age-related macular degeneration [2 ], degenerative myopia, and diabetic retinopathy [3 ]. Lately, it’s also discovered that the recognition of vascular geometric change may be significant to evaluate whether individuals have high bloodstream pressure or coronary disease [4 ]. Retinal vessel extraction is very required for ophthalmologists to identify various eye illnesses. Furthermore, precisely segmented vessels is quite useful for feature-based retinal image registration.

Generally, existing retinal vessel segmentation methods could be roughly split into two groups. The very first category is supervised learning-based method. Such methods require tremendous manual segmentations of vasculature to coach the classifier. Staal et al. [5 ] first adopt a ridge-extraction approach to separate the look into numerous patches. For every pixel within the patch, feature vector which contains profile information for example width, height, and edge strength is computed. Feature vectors will be classified utilizing a k nearest neighbors classifier with consecutive forward feature selection strategy. Soares et al. [6 ] selected pixel value and multiscale 2D Gabor wavelet coefficients to create the feature vector. A Bayesian classifier according to class-conditional probability density functions was utilized to carry out a fast classification and model complex decision surfaces. Marn et al. [7 ] suggested a brand new supervised approach to segment retinal vessels. For every pixel, 5 grey-level descriptors and a pair of moment invariants-based options that come with a squared window were computed because the feature vector. A multilayer neural network plan ended up being adopted to classify each pixel. The performance of these method depends upon the correlation between training data and test data. If both of these datasets are very different, the segmentation results might be under ideal. On top of that, the manual segmentation step will bring additional burden to ophthalmologists. Therefore, such methods haven’t been broadly utilized in clinic yet.

The 2nd category is rule-based method. Such methods usually have to extract neighborhood information of every pixel. The details are then accustomed to label the pixel based on some f preset rules. Matched filter [8 ], model-based method [9 ], and morphology-based method [10 ] all fit in with this category. Within the literature [8 ], Sofka and Stewart suggested a multiscale Gaussian and Gaussian-derivative profile kernel to identify vessels at a number of widths. Lam et al. [9 ] suggested a multiconcavity modeling method of handle unhealthy retinal images. In Lam’s approach, a lineshape concavity measure was utilized to get rid of dark lesions along with a in your area normalized concavity measure is made to remove spherical intensity variation. The 2 concavity measures are used together based on their record distributions to identify vessels generally retinal images. Mendona and Campilho [10 ] suggested an area growing formula within the morphological processed image to extract vascular centerline. First, four-direction-based differential operator was utilized to identify vascular centerlines. These centerlines were then merged to accomplish vascular centerlines and selected as seed points. Finally, region growing step was performed to rebuild the vasculature. These techniques don’t require training steps and also the interactions with doctors will also be minimized thus rule-based methods happen to be broadly utilized in clinical application. However, ideal automatic retinal vessel segmentation continues to be difficult. This could mainly be related to 2 reasons. One would be that the contrast of some small vessels might be very reasonable, particularly in some pathologies affected images. Another reason would be that the image noise for example edge blurring may disturb the ultimate segmentation results.

Lately, graph-cut methods [11 –15 ] happen to be extremely popular in image segmentation. It is because graph-cut methods could achieve the worldwide optimal worth of the predefined energy function. On top of that, the consumer interactions of these methods will also be quite simple. Several graph-cut-based methods happen to be suggested to resolve retina image segmentations. Chen et al. [16 ] suggested a 3D graph-search-graph-cut approach to segment multilayers of 3D March retinal images. The multi-layers of retina and also the symptomatic exudate-connected derangements (SEAD) are effectively segmented. Inspired through the superior performance of graph-cut method, we advise a brand new approach to extract the bloodstream vessels in retinal images following our previous work [17 ]. First, we execute a novel multiscale Hessian-based filter to compute the utmost response of vessel likeness function for every pixel, which is often used to boost the bloodstream vessels of grey retinal images. Then, we adopt a nonlocal mean filter to suppress the noise from the enhanced image. Next, a radial gradient symmetry transformation is adopted to enhance the recognition of vessel structures and suppress the nonvessel structures. Finally, a precise graph-cut segmentation is conducted using previous symmetry transformation being an initial.

To be able to enhance the contrast of retinal vasculature with various widths, we advise a multiscale Hessian-based enhancement. Frangi et al. [18 ] have suggested a means to identify the tubular structure in line with the eigenvalues of Hessian matrix. We denote by

the eigenvalues of scale-related Hessian matrix, which is understood to be follows:

where’s an experiential parameter so we place it to

The part worth of signifies the saliency of tubular structure for every pixel. We search within the scale range

to obtain the maximum response of vessel likeness function. Figure 2 shows the perfect scale property from the input image. The pixel value means the size that’s akin to the maximal function worth of. As seen from Figure 2. the positive correlation between vessel width and optimal scale is apparent. The main vessel owns a sizable scale property as the small vessel owns a little scale property.

Figure 1: An example of an input retinal image, downloaded from the publicly available DRIVE database (www.isi.uu.nl/Research/Databases/DRIVE/).

Figure 2: An example of the scale image of Figure 1; the pixel value is the scale that corresponds to the maximal value of the vessel likeness function.

Figure 3 gives an example of multiscale Hessian-based enhancement, in which the pixel value is the vessel likeness function value. As shown in Figure 3, the whole retinal vasculature is conspicuously enhanced. However, some nonvessel structures are also enhanced, including the optic disk, yellow spots, and speckles. These nonvessel structures need to be removed in the following steps.

Figure 3: An example of the multiscale Hessian-based enhancement of Figure 1; the pixel value is the vessel likeness function value.

The effect of multiscale Hessian-based enhancement is apparent. As shown in Figure 3, the whole vasculature is considerably strengthened. However, some nonvessel structures are also enhanced, which makes the enhanced image noisy. To suppress image noise while preserving the structural information, we apply a nonlocal mean filtering [19] step. We denote the enhanced image and the filtered image as

The filtering step is described by the following equation:

Here the filtered value is computed over the filtering neighborhood using a weighting factor, which is defined as follows:

where the feature vector consists of the pixel values in the local area surrounding a pixel, together with a filtering parameter. The sizes of the filtering neighborhood and of the local area must be chosen suitably to balance filtering performance against computation cost. Figure 4 shows a result of nonlocal mean filtering. The noise of the enhanced image is suppressed effectively while the vasculature is preserved.
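To make the roles of the filtering neighborhood, the local area, and the filtering parameter concrete, the following Python sketch implements a basic nonlocal mean filter on a small image stored as a list of rows. It is a simplified illustration under stated assumptions: the names `search_r`, `patch_r`, and `h_param` and their defaults are hypothetical, the patch distance is a plain squared Euclidean distance without Gaussian weighting, and image borders are handled by clamping.

```python
import math

def patch(img, y, x, r):
    """Feature vector: values in the (2r+1) x (2r+1) local area around (y, x)."""
    h, w = len(img), len(img[0])
    return [img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
            for dy in range(-r, r + 1) for dx in range(-r, r + 1)]

def nonlocal_mean(img, search_r=2, patch_r=1, h_param=0.1):
    """Weighted average over a search window; weights depend on patch similarity."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ref = patch(img, y, x, patch_r)
            num = den = 0.0
            for yy in range(max(0, y - search_r), min(h, y + search_r + 1)):
                for xx in range(max(0, x - search_r), min(w, x + search_r + 1)):
                    cand = patch(img, yy, xx, patch_r)
                    dist2 = sum((a - b) ** 2 for a, b in zip(ref, cand))
                    weight = math.exp(-dist2 / (h_param ** 2))
                    num += weight * img[yy][xx]
                    den += weight
            # den >= 1 because the pixel itself always contributes weight 1
            out[y][x] = num / den
    return out
```

The cost grows with the product of the search-window area and the patch area, which is the trade-off between filtering performance and computation cost mentioned above.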

Figure 4: An output of the nonlocal mean filter applied to Figure 3, in which the image noise is effectively suppressed while the vasculature is preserved.

To remove the nonvessel structures, we propose a radial gradient symmetry transform method based on Loy's work [20]. An ideal vessel structure is shown in Figure 5. As shown in Figure 5, the gradient vectors have a symmetric property in both magnitude and direction. Nonvessel structures have no such property. Therefore, we propose a symmetric vessel likeness function as follows:

where the definition combines a vessel likeness function of a given point along the direction of the gradient vector with an indicator function that signifies whether the point has the gradient symmetry property. The computation of these two functions includes the following steps. (1) For each pixel in the filtered image shown in Figure 4, we compute its vessel contribution along the gradient direction. The normalized gradient vector is denoted by

The coordinates of the pixels that are affected by a pixel

are computed as follows:

where the scale used is the optimal scale parameter of the pixel, as shown in Figure 2. (2) For these affected pixels, we compute the vessel accumulation image

by the following equation:

(3) The vessel likeness function is then given by

where the expression contains a normalization factor and a radial strictness parameter. (4) For each pixel, we search its neighborhood along the gradient direction. As shown in Figure 5, the indicator function is set according to whether there are points that satisfy the following condition:

(5) For the symmetric vessel likeness function, we set a vessel likeness threshold

to extract a rough vasculature. Next, we apply an erosion step to obtain a thinned retinal vasculature. We then count the pixels in each connected vessel component. If the pixel count is smaller than a preset threshold, we regard the component as background noise and remove it.
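The thresholding and small-component removal in step (5) can be sketched in Python as follows. This is an illustrative sketch only: the function name, the parameters `threshold` and `min_pixels`, and the choice of 8-connectivity are assumptions, and the erosion step is omitted for brevity.

```python
from collections import deque

def remove_small_components(likeness, threshold, min_pixels):
    """Threshold a likeness map, then drop connected components below min_pixels."""
    h, w = len(likeness), len(likeness[0])
    mask = [[likeness[y][x] > threshold for x in range(w)] for y in range(h)]
    seen = [[False] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            # Breadth-first search collects one 8-connected component.
            component, queue = [], deque([(y, x)])
            seen[y][x] = True
            while queue:
                cy, cx = queue.popleft()
                component.append((cy, cx))
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = cy + dy, cx + dx
                        if (0 <= ny < h and 0 <= nx < w
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            # Components below the preset size are treated as background noise.
            if len(component) < min_pixels:
                for cy, cx in component:
                    mask[cy][cx] = False
    return mask
```

For example, a four-pixel vessel fragment survives a `min_pixels` value of 3, whereas an isolated bright speckle is removed.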

Figure 5: An example of the radial gradient symmetry transform; a pixel in the vessel area is usually located between two symmetric gradient vectors.

Through the previous processing steps, we have obtained a coarsely extracted vasculature. The final step is to accurately segment the vessels from these results. This can be described as a pixel labeling problem, which can be formulated using the energy function:

where the labels are drawn from a labeling set and

is the data prior energy, which measures the cost of assigning a label to a given pixel according to prior information.

is the potential energy, which measures the smoothness of the neighboring pixel system, balanced by a weighting parameter.
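As a small illustration of how such a labeling energy is evaluated, the Python sketch below sums a data term over pixels and a smoothness term over neighboring pairs with different labels. The argument names, the placement of the weighting parameter `lam` on the smoothness term, and the convention that only label discontinuities are penalized are assumptions for the example, following the usual graph-cut formulation.

```python
def labeling_energy(labels, data_cost, pair_cost, neighbors, lam=1.0):
    """Energy of a binary labeling: data term plus weighted smoothness term.

    labels:    list of 0/1 labels, one per pixel
    data_cost: data_cost[p][l] is the cost of giving label l to pixel p
    pair_cost: function (p, q) -> penalty when neighbors p and q differ
    neighbors: iterable of (p, q) index pairs in the neighborhood system
    """
    data = sum(data_cost[p][labels[p]] for p in range(len(labels)))
    smooth = sum(pair_cost(p, q) for p, q in neighbors if labels[p] != labels[q])
    return data + lam * smooth
```

A lower value means the labeling agrees better with the prior information while keeping neighboring labels coherent.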

We adopt the graph-cut algorithm to optimize the energy function (12). The centerline is used as a shape prior to guide the extraction process. In our framework, a graph

is created with nodes corresponding to the pixels of the retinal image; it consists of the set of all nodes and the set of all links connecting neighboring nodes. The neighboring pixel system is built with eight neighboring pixels. The terminal nodes are defined as the source

and the sink. As an initialization, we extract the centerline of the previously extracted vasculature. The pixels on the centerline are regarded as definite foreground

and the pixels with

are labeled as candidate foreground. A further condition identifies the pixels labeled as background

and the others are candidate background. That is,

For each pixel, we compute its minimum distances to the definite foreground and to the background according to the literature [22]. The cost of the t-links can be computed as follows:

The nodes in the definite foreground and in the background are always labeled as foreground and background, respectively. The weight of an n-link describes the labeling coherence of a pixel with its neighbors. We use the pixel value information as the neighborhood penalty term. The cost of the n-links can be defined as follows:

where a small constant is added to prevent division by zero. When the graph-cut algorithm terminates, we encourage candidate foreground pixels to be labeled as foreground and discourage candidate background pixels from being labeled as background.
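The t-link and n-link construction above leads to a minimum cut between source and sink. The following Python sketch shows the idea on a tiny graph using the Edmonds-Karp max-flow method, which stands in for whatever solver the authors used. The function names, the large constant `HARD` for definite seeds, and the inverse-difference form of `n_link_weight` are assumptions made for illustration.

```python
from collections import deque

HARD = 1e9  # assumed large capacity that pins definite seeds to their terminal

def n_link_weight(value_p, value_q, eps=1e-3):
    """Assumed n-link form: similar pixel values give a strong link."""
    return 1.0 / (abs(value_p - value_q) + eps)

def min_cut_labels(n_pixels, source_cap, sink_cap, n_links):
    """Binary labeling by max-flow/min-cut; returns 1 for foreground, else 0.

    source_cap[p]: capacity of t-link source -> p
    sink_cap[p]:   capacity of t-link p -> sink
    n_links:       dict {(p, q): weight} between neighboring pixels (undirected)
    """
    s, t = n_pixels, n_pixels + 1
    cap = [dict() for _ in range(n_pixels + 2)]

    def add_edge(u, v, c_uv, c_vu=0.0):
        cap[u][v] = cap[u].get(v, 0.0) + c_uv
        cap[v][u] = cap[v].get(u, 0.0) + c_vu

    for p in range(n_pixels):
        add_edge(s, p, source_cap[p])
        add_edge(p, t, sink_cap[p])
    for (p, q), weight in n_links.items():
        add_edge(p, q, weight, weight)

    def reachable():
        """Breadth-first search in the residual graph, recording parents."""
        parent = {s: None}
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v, c in cap[u].items():
                if c > 1e-12 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        return parent

    while True:
        parent = reachable()
        if t not in parent:
            break  # no augmenting path left: the cut is minimal
        flow, v = float("inf"), t
        while parent[v] is not None:       # bottleneck along the path
            flow = min(flow, cap[parent[v]][v])
            v = parent[v]
        v = t
        while parent[v] is not None:       # push flow, update residuals
            u = parent[v]
            cap[u][v] -= flow
            cap[v][u] += flow
            v = u
    # Pixels still reachable from the source lie on the foreground side.
    return [1 if p in parent else 0 for p in range(n_pixels)]
```

On a five-pixel strip with a definite foreground seed at one end and a background seed at the other, the cut falls on the weakest n-link, which is where neighboring pixel values differ most.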

We test our method on the publicly available DRIVE database [5]. Three measures, sensitivity (SE), specificity (SP), and accuracy (AC), are used to evaluate the performance of our method within the image field of view. They are defined as follows:

SE is the ratio of the number of correctly classified vessel pixels to the number of vessel pixels in the ground truth, SP is the ratio of the number of correctly classified nonvessel pixels to the number of nonvessel pixels, and AC is the ratio of the total number of correctly classified pixels to the total number of pixels.
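These three ratios can be computed as in the following Python sketch. The function name, the flattened list inputs, and the optional field-of-view mask argument `fov` are assumptions for the example.

```python
def segmentation_scores(pred, truth, fov=None):
    """Return (SE, SP, AC) for binary vessel masks given as flat sequences.

    pred, truth: sequences of 0/1 (1 = vessel)
    fov:         optional sequence of 0/1; pixels with 0 are ignored
    """
    tp = tn = n_vessel = n_nonvessel = 0
    for i, (p, g) in enumerate(zip(pred, truth)):
        if fov is not None and not fov[i]:
            continue  # outside the field of view
        if g:
            n_vessel += 1
            tp += 1 if p else 0
        else:
            n_nonvessel += 1
            tn += 1 if not p else 0

    def ratio(a, b):
        return a / b if b else float("nan")

    se = ratio(tp, n_vessel)                     # sensitivity
    sp = ratio(tn, n_nonvessel)                  # specificity
    ac = ratio(tp + tn, n_vessel + n_nonvessel)  # accuracy
    return se, sp, ac
```

Because nonvessel pixels greatly outnumber vessel pixels in a retinal image, AC is dominated by SP, so a method can gain sensitivity while losing accuracy.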

We test the proposed method on 40 images and compare it with the methods developed by Staal et al. [5], Mendonça and Campilho [10], Wang et al. [21], and Marín et al. [7]. The mean values and standard deviations (sd.) of these methods are shown in Table 1. Values that cannot be obtained from the literature are denoted by NA.

Table 1: Comparison of different segmentation methods.

From Table 1 we can see that there is a marked improvement in sensitivity, which means that our method is very effective at extracting small vessels. However, our method is not as good as the others in specificity and accuracy. For clinical application, the accuracy should be better than 90%. Our method meets this standard, but substantial improvements are still needed.

Some of the extraction results are shown in Figures 6 and 7. As can be seen from Figure 6, most of the vessels in the retina are finely extracted; however, some nonvessel structures are also extracted and some small vessels are not correctly segmented. This is mainly because our method focuses on small vessel extraction, which is not easy for ophthalmologists to perform manually. As a result, some boundaries between the retinal region and the background are mistakenly recognized as vessels. In addition, the optic disk cannot be fully excluded from the final result. Figure 7 shows two segmentation results for ill-conditioned retinal images, in which some speckles are hard to differentiate from small vessels. Our method fails to extract an accurate vasculature for these images, for which the AC values are 0.8720 and 0.8814, respectively. In addition, we exchanged the order of nonlocal mean filtering and multiscale Hessian-based enhancement. Experimental results show that there is little difference between the performances. This indicates that the nonlocal mean filter is very robust, consistent with its wide use in image processing.

Figure 6: An example of 3 extraction results: the first row shows 3 input retinal images and the second row shows the segmentation results of the retinal vessels.

Figure 7: Two segmentation results of ill-conditioned retinal images; the speckles reduce the performance of our algorithm.

In conclusion, this paper first presents a multiscale Hessian-based enhancement for retinal images. Next, we adopt an effective nonlocal mean filtering step to suppress the noise of the enhanced image. Then, we propose a radial gradient symmetry transform method to suppress the nonvessel artifacts. Finally, a graph-cut step is taken to accurately segment the retinal vessels. Experiments show that our method is very sensitive for vessel segmentation, but its performance in small vessel extraction and speckle exclusion still needs to be improved. This will be the subject of our further work.

This work is supported in part by the Natural Science Foundation of Jiangsu Province (Grant no. BK2011331), National Science Foundation of China (Grant no. 61201117), Special Funded Program on National Key Scientific Instruments and Equipment Development (Grant no. 2011YQ040082), and the second Phase Major Project of Suzhou Institute of Biomedical Engineering and Technology (Grant no. Y053011305).

- C. Sanchez-Galeana, C. Bowd, E. Z. Blumenthal, P. A. Gokhale, L. M. Zangwill, and R. N. Weinreb, “Using optical imaging summary data to detect glaucoma,” Ophthalmology, vol. 108, no. 10, pp. 1812–1818, 2001.

- S. Wang, L. Xu, Y. Wang, Y. Wang, and J. B. Jonas, “Retinal vessel diameter in normal and glaucomatous eyes: the Beijing eye study,” Clinical and Experimental Ophthalmology, vol. 35, no. 9, pp. 800–807, 2007.

- G. Garhöfer, C. Zawinka, H. Resch, P. Kothy, L. Schmetterer, and G. T. Dorner, “Reduced response of retinal vessel diameters to flicker stimulation in patients with diabetes,” British Journal of Ophthalmology, vol. 88, no. 7, pp. 887–891, 2004.

- H. Yatsuya, A. R. Folsom, T. Y. Wong, R. Klein, B. E. K. Klein, and A. R. Sharrett, “Retinal microvascular abnormalities and risk of lacunar stroke: Atherosclerosis Risk in Communities Study,” Stroke, vol. 41, no. 7, pp. 1349–1355, 2010.

- J. Staal, M. Abràmoff, M. Niemeijer, M. A. Viergever, and B. van Ginneken, “Ridge-based vessel segmentation in color images of the retina,” IEEE Transactions on Medical Imaging, vol. 23, no. 4, pp. 501–509, 2004.

- J. V. B. Soares, J. J. G. Leandro, R. M. Cesar Jr., H. F. Jelinek, and M. J. Cree, “Retinal vessel segmentation using the 2-D Gabor wavelet and supervised classification,” IEEE Transactions on Medical Imaging, vol. 25, no. 9, pp. 1214–1222, 2006.

- D. Marín, A. Aquino, M. E. Gegúndez-Arias, and J. M. Bravo, “A new supervised method for blood vessel segmentation in retinal images by using gray-level and moment invariants-based features,” IEEE Transactions on Medical Imaging, vol. 30, no. 1, pp. 146–158, 2011.

- M. Sofka and C. V. Stewart, “Retinal vessel centerline extraction using multiscale matched filters, confidence and edge measures,” IEEE Transactions on Medical Imaging, vol. 25, no. 12, pp. 1531–1546, 2006.

- B. S. Y. Lam, Y. Gao, and A. W. C. Liew, “General retinal vessel segmentation using regularization-based multiconcavity modeling,” IEEE Transactions on Medical Imaging, vol. 29, no. 7, pp. 1369–1381, 2010.

- A. M. Mendonça and A. Campilho, “Segmentation of retinal blood vessels by combining the detection of centerlines and morphological reconstruction,” IEEE Transactions on Medical Imaging, vol. 25, no. 9, pp. 1200–1213, 2006.

- Y. Boykov, O. Veksler, and R. Zabih, “Fast approximate energy minimization via graph cuts,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 23, no. 11, pp. 1222–1239, 2001.

- Y. Boykov and V. Kolmogorov, “An experimental comparison of min-cut/max-flow algorithms for energy minimization in vision,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 26, no. 9, pp. 1124–1137, 2004.

- Y. Boykov and G. Funka-Lea, “Graph cuts and efficient N-D image segmentation,” International Journal of Computer Vision, vol. 70, no. 2, pp. 109–131, 2006.

- X. Chen, J. K. Udupa, U. Bagci, Y. Zhuge, and J. Yao, “Medical image segmentation by combining graph cuts and oriented active appearance models,” IEEE Transactions on Image Processing, vol. 21, no. 4, pp. 2035–2046, 2012.

- D. Freedman and T. Zhang, “Interactive graph cut based segmentation with shape priors,” in Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR ’05), pp. 755–762, June 2005.

- X. Chen, M. Niemeijer, L. Zhang, K. Lee, M. D. Abramoff, and M. Sonka, “Three-dimensional segmentation of fluid-associated abnormalities in retinal OCT: probability constrained graph-search-graph-cut,” IEEE Transactions on Medical Imaging, vol. 31, no. 8, pp. 1521–1531, 2012.

- D. Xiang, J. Tian, K. Deng, X. Zhang, F. Yang, and X. Wan, “Retinal vessel extraction by combining radial symmetry transform and iterated graph cuts,” in Proceedings of the Annual International Conference of the IEEE Engineering in Medicine and Biology Society, pp. 3950–3953, 2011.

- A. F. Frangi, W. J. Niessen, K. L. Vincken, and M. A. Viergever, “Multiscale vessel enhancement filtering,” in Medical Image Computing and Computer-Assisted Intervention, vol. 1496 of Lecture Notes in Computer Science, pp. 130–137, 1998.

- A. Buades, B. Coll, and J. M. Morel, “A non-local algorithm for image denoising,” in Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR ’05), pp. 60–65, June 2005.

- G. Loy and A. Zelinsky, “Fast radial symmetry for detecting points of interest,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 25, no. 8, pp. 959–973, 2003.

- L. Wang, A. Bhalerao, and R. Wilson, “Analysis of retinal vasculature using a multiresolution Hermite model,” IEEE Transactions on Medical Imaging, vol. 26, no. 2, pp. 137–152, 2007.

- Y. Li, J. Sun, C. Tang, and H.-Y. Shum, “Lazy snapping,” ACM Transactions on Graphics, vol. 23, no. 3, pp. 303–308, 2004.
