The 14th-century maxim referred to as Ockham’s Razor, paraphrased by Jefferys and Berger (1992) as “It is vain to do with more what can be done with less”, is usually applied to the interpretation of scientific results. However, it also applies to the choice of analysis. Thus if one has a very simple environmental data set, consisting of a few species and a few samples, ordination is not useful. In this case, the data are easiest to interpret in a simple table.

In the typical data set, however, there are many species and many samples. It is difficult for the human mind to contemplate many dimensions simultaneously. The aim of ordination is to implement Ockham’s Razor: a few dimensions are easier to understand than many dimensions. A good ordination technique can determine the most important dimensions (or gradients) in the data set, and ignore “noise” or chance variation.
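To make the dimensionality argument concrete, here is a minimal sketch using principal components analysis (PCA), chosen only because it is one of the simplest ordination techniques, not because this text prescribes it. The data are hypothetical: six species whose abundances each respond linearly to a single gradient, plus a little random noise. The share of total variance captured by the first ordination axis shows how one dimension can summarize six.

```python
import random


def simulate_community(n_samples=20, seed=1):
    """Hypothetical data: rows are samples, columns are species.
    Each species responds linearly to one gradient, plus small noise."""
    rng = random.Random(seed)
    slopes = [4.0, -3.0, 5.0, 2.0, -4.0, 3.0]  # assumed species responses
    gradient = [i / (n_samples - 1) for i in range(n_samples)]
    return [[10.0 + b * x + rng.gauss(0.0, 0.2) for b in slopes] for x in gradient]


def covariance_matrix(rows):
    """Species-by-species sample covariance matrix."""
    n, p = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(p)]
    centered = [[r[j] - means[j] for j in range(p)] for r in rows]
    return [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(p)]
            for a in range(p)]


def leading_eigenvalue(matrix, iterations=500):
    """Power iteration: repeatedly multiply a vector by the matrix and renormalize."""
    p = len(matrix)
    v = [1.0] * p
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    mv = [sum(matrix[i][j] * v[j] for j in range(p)) for i in range(p)]
    return sum(v[i] * mv[i] for i in range(p))  # Rayleigh quotient; v has unit length


def first_axis_share(rows):
    """Fraction of total variance (trace of the covariance matrix) on PCA axis 1."""
    cov = covariance_matrix(rows)
    total = sum(cov[i][i] for i in range(len(cov)))
    return leading_eigenvalue(cov) / total


share = first_axis_share(simulate_community())
print(f"share of variance on the first axis: {share:.2f}")
```

With real community data the first axis rarely dominates this cleanly; the point is only that the few leading axes, not all species dimensions, carry the main gradient.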

Both indirect and direct gradient analysis potentially serve to reduce the dimensionality of the data set. However, reducing dimensionality is not the only use of ordination. Before the introduction of CCA, most widely used ordination techniques were indirect, and the primary purpose of ordination was considered “exploratory” (Gauch 1982). It was the task of the ecologist to use his or her knowledge and intuition to collect and interpret data; pure objectivity might interfere with the ability to distinguish important gradients. Ordination was frequently considered as much an art as a science.

Once CCA became available, multivariate direct gradient analysis became possible.

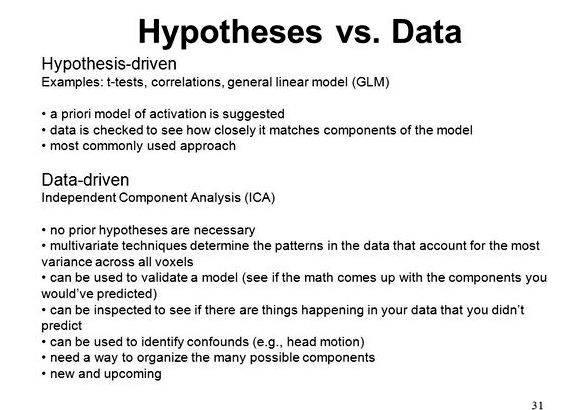

It became possible to rigorously test statistical hypotheses and to go beyond mere “exploratory” analysis. However, testing hypotheses requires complete objectivity, which leads to repeatability and falsifiability. The two fundamental motivations for multivariate direct gradient analysis, hypothesis testing and exploratory analysis, conflict with each other to some degree:

Table 1. Hypothesis-driven analysis versus exploratory analysis: their major characteristics and motivations. This table applies to regression techniques and indirect gradient analysis as well as to CCA.

| | Hypothesis-driven analysis | Exploratory analysis |
|---|---|---|
| Motivating question | “Can I reject the null hypothesis that species are unrelated to a postulated environmental factor or factors?” | “How can I optimally explain or describe variation in my data set?” |
| Site location | Sites should be located objectively: random, stratified random, or regular placement | Sites may be located subjectively |
| Analyses | Should be planned a priori | “Data diving” permissible; post-hoc analyses, explanations, and hypotheses OK |
| p-values | Meaningful | Only a rough guide |
| Stepwise techniques | Not valid without cross-validation | Valid and useful (e.g. forward selection) |

To perform a hypothesis-driven analysis, one must be very specific about the analyses one wants to perform. The null hypothesis must be clearly stated, and the data must be collected in a repeatable manner. Usually, the sampling design requires random, stratified random, or regular placement of study plots.
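The three objective designs can be sketched as follows. This is an illustration under assumptions not stated in the text: the study area is rescaled to the unit square, strata form a k-by-k grid, and the function names are hypothetical. Drawing locations from a seeded pseudo-random generator is one simple way to keep the placement repeatable.

```python
import random


def random_plots(n, rng):
    """Simple random design: every plot location is drawn independently and uniformly."""
    return [(rng.random(), rng.random()) for _ in range(n)]


def stratified_random_plots(k, rng):
    """Stratified random design: split the area into k-by-k strata and
    place one plot at a random position inside each stratum."""
    return [((i + rng.random()) / k, (j + rng.random()) / k)
            for i in range(k) for j in range(k)]


def regular_plots(k):
    """Regular (systematic) design: one plot at the centre of each k-by-k grid cell."""
    return [((i + 0.5) / k, (j + 0.5) / k) for i in range(k) for j in range(k)]


rng = random.Random(42)  # recording the seed lets anyone regenerate the same layout
designs = {
    "random": random_plots(16, rng),
    "stratified random": stratified_random_plots(4, rng),
    "regular": regular_plots(4),
}
for name, plots in designs.items():
    print(name, [(round(x, 2), round(y, 2)) for x, y in plots[:3]])
```

Stratified random placement guarantees coverage of every part of the area while keeping the exact position within each stratum free of investigator choice.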

If there is any subjectivity involved in locating or orienting study plots, the results are technically invalid. All analyses, including the choice of data transformation and of ordination options (e.g. whether or not to detrend), must be planned in advance; otherwise the investigator runs the risk of “data diving” or “data mining”, i.e. obtaining an artificially significant result because so many alternatives were tried. Stepwise techniques (discussed later) are automated forms of “data diving”, and they can also lead to incorrect statistical inference (Cliff 1987, Draper and Smith 1981). The reward for rigorously adhering to these rather stringent criteria is that the statistical inference (i.e. the p-value) is valid.
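Why trying many alternatives produces artificially significant results can be shown with a short calculation. The sketch assumes the k analyses tried are independent tests of a true null hypothesis; under that null a valid p-value is uniformly distributed on (0, 1), so reporting only the smallest of k p-values gives a false-positive rate of 1 - (1 - α)^k rather than α. Real alternatives (different transformations, detrending or not) are correlated, so the inflation is usually less severe than this formula, but the nominal α no longer holds.

```python
import random


def chance_of_spurious_result(k, alpha=0.05):
    """Probability that at least one of k independent tests of a true null
    hypothesis gives p < alpha: 1 - (1 - alpha)**k."""
    return 1.0 - (1.0 - alpha) ** k


def simulate_dredging(k, alpha=0.05, trials=20000, seed=0):
    """Monte Carlo check. Each simulated 'study' draws k null p-values
    (one per analysis tried) and keeps the smallest, as a data dredger would."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        best_p = min(rng.random() for _ in range(k))
        if best_p < alpha:
            hits += 1
    return hits / trials


for k in (1, 5, 20):
    print(k, round(chance_of_spurious_result(k), 3), round(simulate_dredging(k), 3))
```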

Exploratory analyses may lack statistical rigor, but they are still a mainstay of vegetation science. The aim of exploratory analysis is to uncover pattern by any means, which is an inherently subjective enterprise. Exploratory analyses bring the knowledge, skill, and intuition of the investigator to the experiment. Unless another investigator has identical knowledge, skill, and intuition, the analyses are not strictly repeatable, and hence are not falsifiable. Although one can perform exploratory analyses on sample plots located according to a rigorous, objective sampling design, such careful placement is not required. Indeed, an exploratory analysis may be aided when the investigator subjectively places study plots in locations he or she considers important or interesting. Placing plots in vegetation that appears homogeneous is highly subjective, but very useful for evaluating differences between plots.

With exploratory analysis, “data diving” (e.g. using different transformations of species abundances, modifying ordination options, selecting different subsets of environmental variables, or selecting different subsets of study plots) need not be avoided. Rather, it is a way for the investigator to learn more about the data set. Stepwise analysis is a form of automated data diving, and it is useful as a tool to help uncover “important” or “interesting” variables.

Ecologists are frequently misled into believing that p-values from stepwise methods have a rigorous meaning, or that the results of stepwise methods provide the best model. Such thinking is false.
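To see why a p-value from a stepwise procedure cannot be taken at face value, the sketch below simulates only the first step of forward selection: among several candidate environmental variables, pick the one most strongly correlated with a species’ abundance. Everything here is hypothetical: the response and all candidates are pure random noise, and the two-tailed 5% critical |r| is approximated with Fisher’s z transformation. With a single pre-planned candidate the selected variable exceeds the critical value about 5% of the time, as it should; with many candidates it does so far more often, although no real relationship exists.

```python
import math
import random


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def critical_r(n, z=1.96):
    """Approximate two-tailed 5% critical |r| for n samples (Fisher's z)."""
    return math.tanh(z / math.sqrt(n - 3))


def first_step_false_positive_rate(n_candidates, n=30, trials=2000, seed=0):
    """How often the best of n_candidates noise variables looks 'significant'."""
    rng = random.Random(seed)
    threshold = critical_r(n)
    hits = 0
    for _ in range(trials):
        y = [rng.gauss(0.0, 1.0) for _ in range(n)]  # response: pure noise
        best = max(abs(pearson_r([rng.gauss(0.0, 1.0) for _ in range(n)], y))
                   for _ in range(n_candidates))     # candidates: also pure noise
        if best > threshold:
            hits += 1
    return hits / trials


rates = {m: first_step_false_positive_rate(m) for m in (1, 20)}
print(rates)
```

Later steps of a full forward selection (which would need multiple regression) only compound the problem, since each step again chooses the best of several candidates.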

It is possible to combine exploratory analysis and hypothesis-driven analysis in a larger study. One way of carrying this out is to perform a two-phase study, in which the first phase is an exploratory analysis, possibly involving subjectively located plots and employing many variations on analysis. The patterns found in the first phase are then posed as hypotheses for the second phase. The second phase requires the collection of new data from objectively located plots, and a completely planned data analysis.

Another way to combine the two major kinds of analysis is through data set subdivision. The data set is randomly divided into two subsets: an exploratory subset and a confirmatory subset (also known as model building and model validation, respectively). Many, varied analyses may be carried out on the exploratory subset (including stepwise analysis), and the choice of analyses may depend on intuition, hunches, or superstition. If interesting patterns are found regarding particular environmental variables, or using particular data transformations, these patterns can be statistically tested using the confirmatory subset. To use data set subdivision properly, samples must be objectively located.
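Data set subdivision can be sketched as below. This is a minimal illustration under stated assumptions: a 50/50 random split, a Pearson correlation standing in for whatever exploratory analyses one actually runs, a permutation test as the single confirmatory test, and hypothetical variable names (moisture, ph, elevation) with simulated data. The key property is that the confirmatory p-value is computed only on samples that played no part in choosing the variable.

```python
import math
import random


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def split_samples(n, rng, fraction=0.5):
    """Randomly divide sample indices into exploratory and confirmatory subsets."""
    idx = list(range(n))
    rng.shuffle(idx)
    cut = int(n * fraction)
    return sorted(idx[:cut]), sorted(idx[cut:])


def permutation_p(x, y, rng, n_perm=999):
    """Two-tailed permutation test of the correlation between x and y."""
    observed = abs(pearson_r(x, y))
    y_perm = list(y)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(y_perm)
        if abs(pearson_r(x, y_perm)) >= observed:
            extreme += 1
    return (extreme + 1) / (n_perm + 1)


def explore_then_confirm(env, species, rng):
    """env: dict of variable name -> values per sample; species: abundance per sample."""
    explore, confirm = split_samples(len(species), rng)
    # Exploratory subset: any amount of data diving is allowed; here, pick the strongest correlate.
    best = max(env, key=lambda name: abs(pearson_r([env[name][i] for i in explore],
                                                   [species[i] for i in explore])))
    # Confirmatory subset: one pre-specified test on samples never used for exploring.
    p = permutation_p([env[best][i] for i in confirm], [species[i] for i in confirm], rng)
    return best, p


rng = random.Random(2024)
n = 40
moisture = [rng.random() for _ in range(n)]
env = {
    "moisture": moisture,                           # hypothetical: truly drives abundance
    "ph": [rng.random() for _ in range(n)],         # hypothetical: unrelated noise
    "elevation": [rng.random() for _ in range(n)],  # hypothetical: unrelated noise
}
species = [3.0 * m + rng.gauss(0.0, 0.3) for m in moisture]
best, p = explore_then_confirm(env, species, rng)
print(best, p)
```

Because the exploratory half is never reused for testing, any amount of data diving on it leaves the confirmatory p-value valid, provided the samples were objectively located to begin with.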

Literature cited

Cliff, N. 1987. Analyzing Multivariate Data. Harcourt Brace Jovanovich, San Diego, California.

Draper, N. R. and H. Smith. 1981. Applied Regression Analysis. Second edition. Wiley, New York.

Gauch, H. G., Jr. 1982. Multivariate Analysis in Community Ecology. Cambridge University Press, Cambridge.

Hallgren, E., M. W. Palmer, and P. Milberg. 1999. Data diving with cross-validation: an investigation of broad-scale gradients in Swedish weed communities. Journal of Ecology 87:1037-1051.

Jefferys, W. H. and J. O. Berger. 1992. Ockham’s Razor and Bayesian Analysis. American Scientist 80:64-72.
